DCaH – Dead?

Hi! 🙂

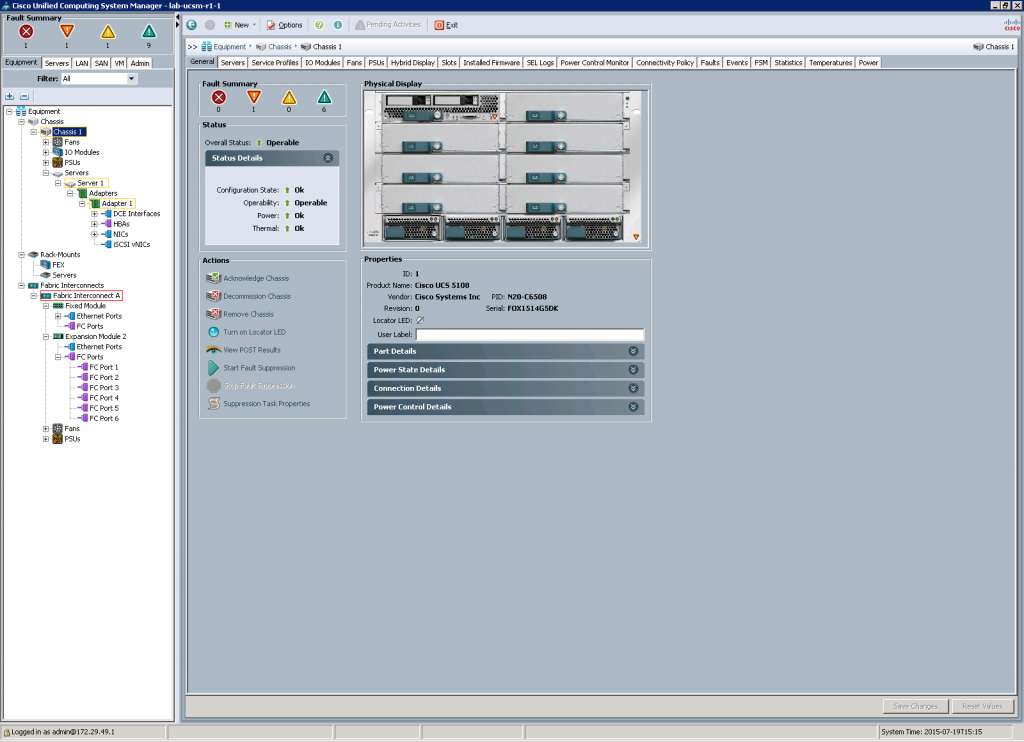

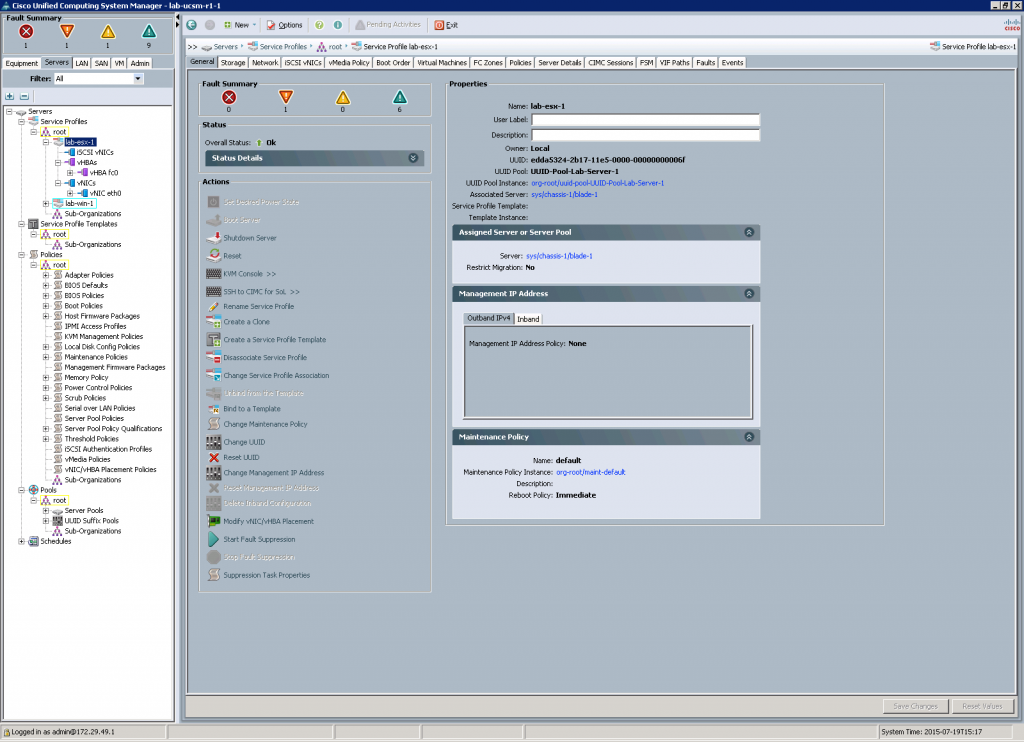

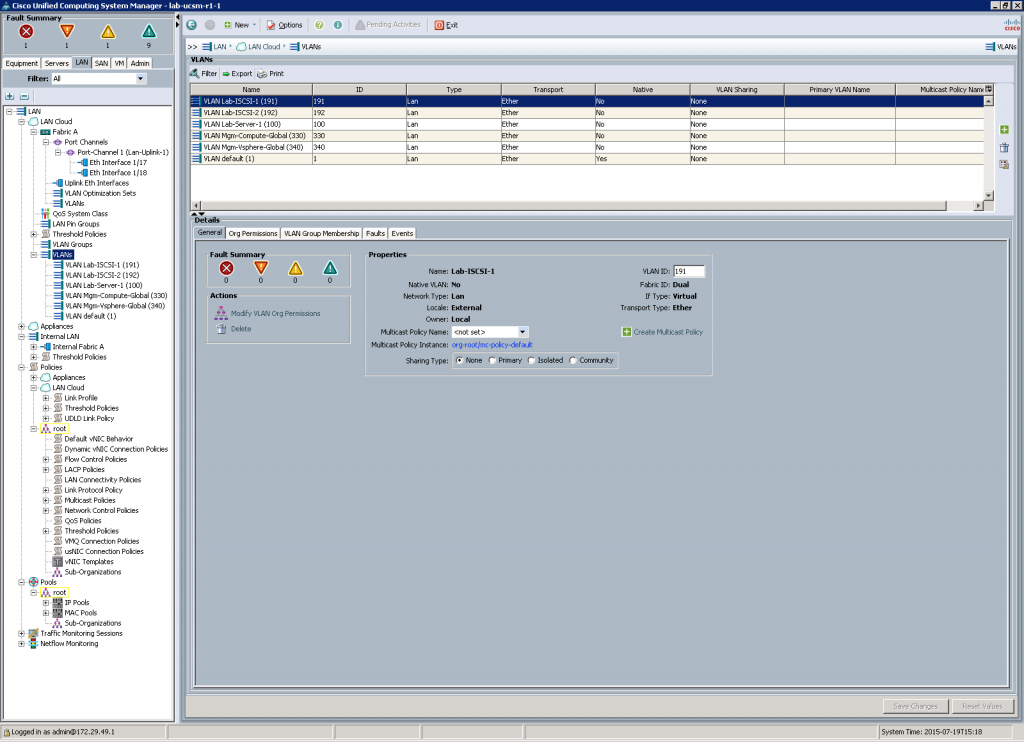

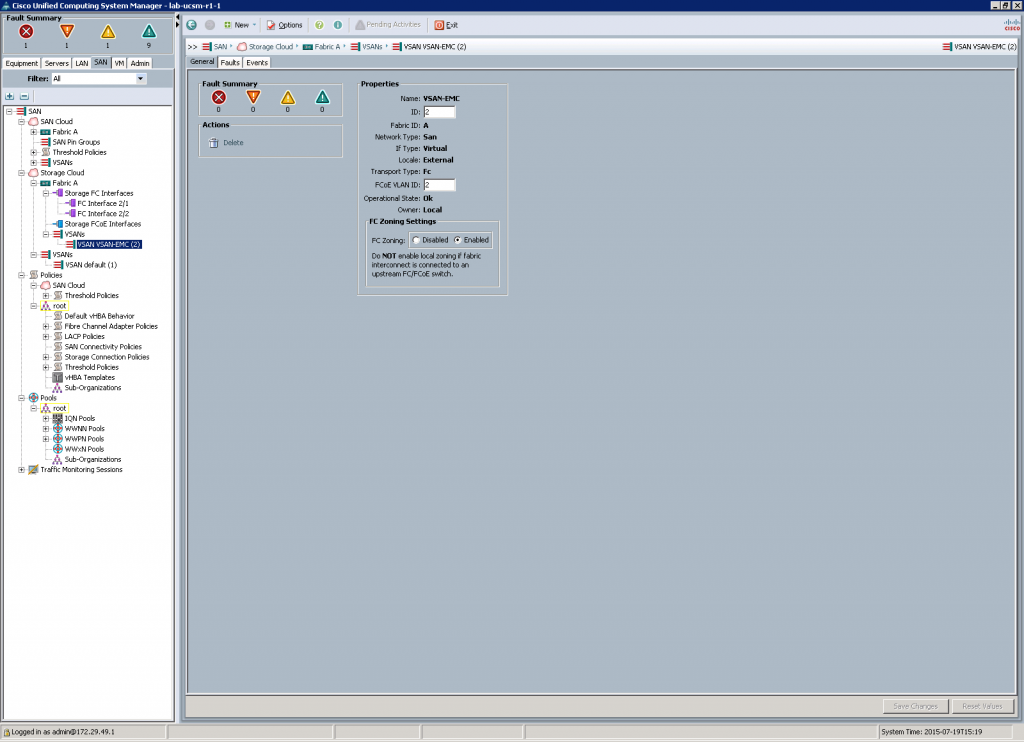

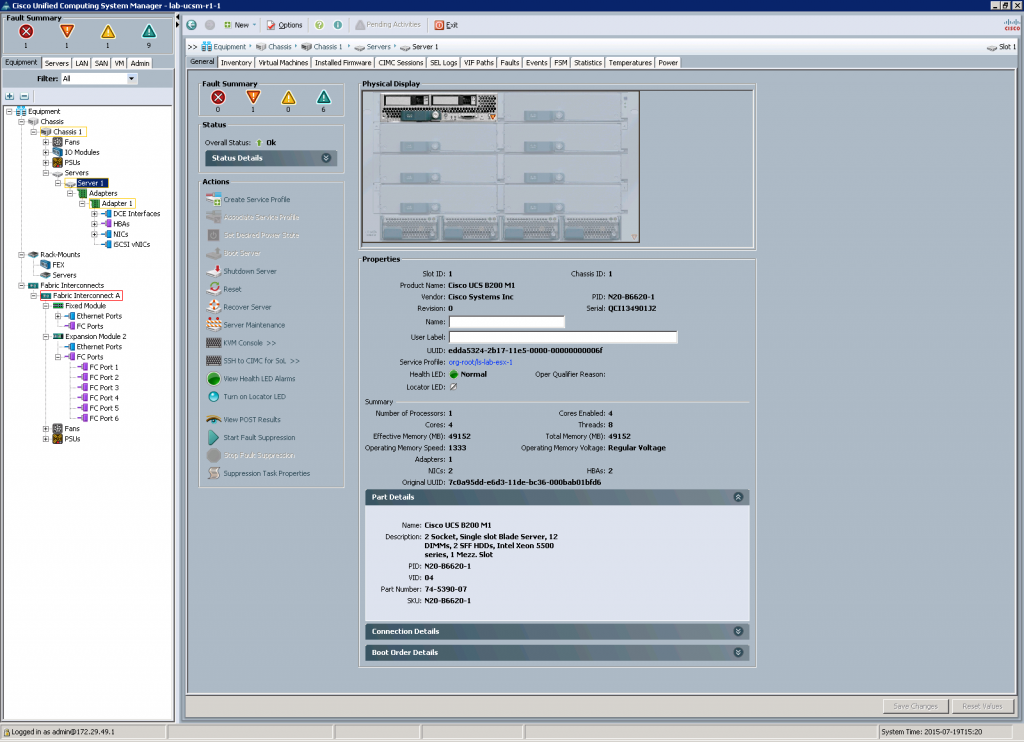

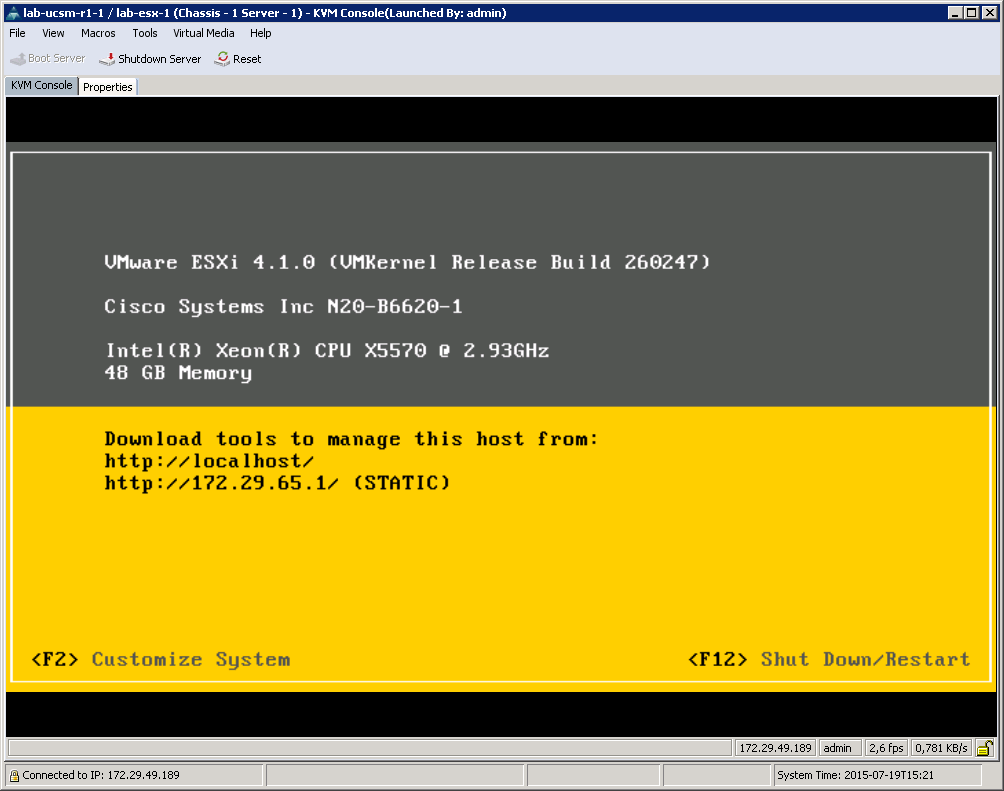

It’s been a long while since I last posted here. If a blog hasn’t delivered content in 2 years, it’s dead! And yes, this blog has been dead for 2 years. But I’m not dead, and my DataCenter at Home (DCaH) project isn’t dead either. My UCS, my NetApps and all my other hardware are in great condition. 🙂 But my job changed, and this is perhaps one reason why all my nice toys aren’t the center of my life anymore. 😉 That’s life!

Don’t worry! There are some changes on the horizon. 🙂 My future isn’t datacenter-centric anymore. I have to deal with software and software architectures. It’s cool and it’s very interesting, but my heart loves hardware. Now there’s a big change in my life: I cannot stay in my current home and need a new one. My family and I have found a new one… and it has a nice cellar. 😉 A very nice cellar. 😀

As mentioned above, I don’t need all that datacenter stuff for my job. But I don’t want to sell all my jewels. 🙂 And this is the beginning of a new chapter for this blog and for my DCaH project. 🙂

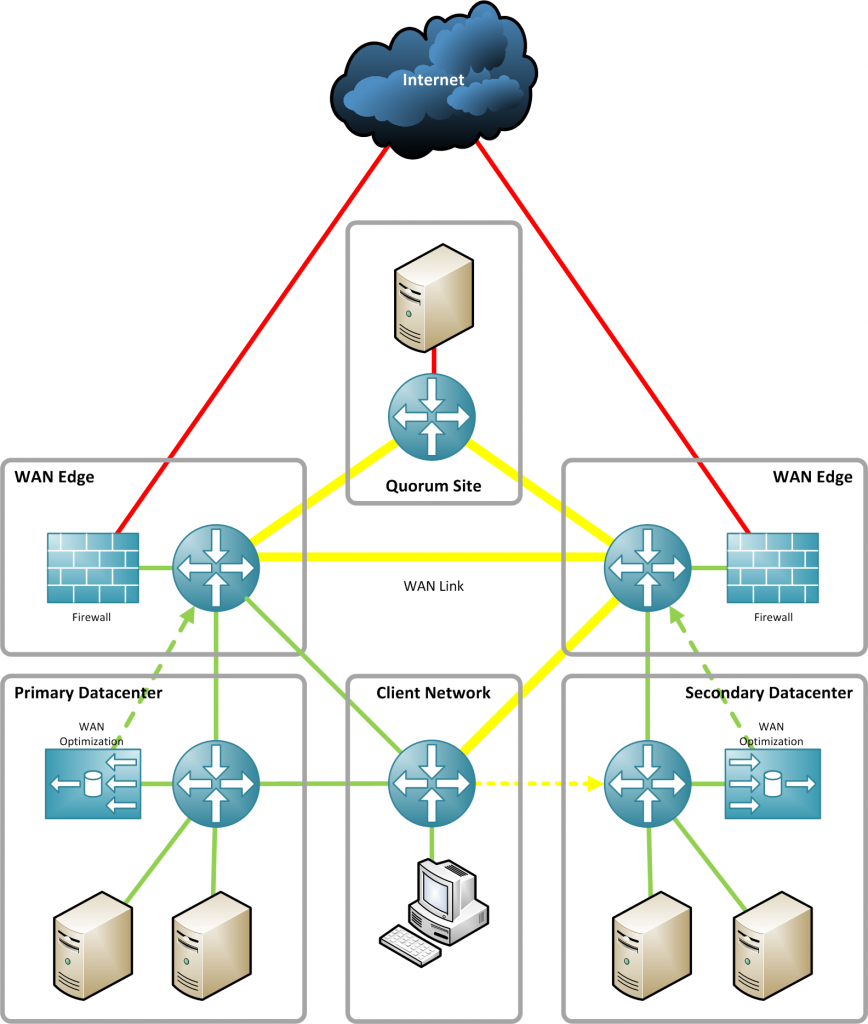

There are a lot of people who work on model railways. I’d like to do the same, but not with railway toys! Why not build a model (mini) datacenter? 😉 Of course it’s a little bit outdated, but a model railway is outdated, too. 😉 I can spend my time on nice details and work on a perfect home datacenter, with fine cabling, nice infrastructure and lots of other cool things. I’m so excited about this new project and I hope you will enjoy it. 🙂

My current plan is to start my new project in 3 to 5 months. And I promise that I will deliver new content. 🙂 Lots of pictures, fun, and a DataCenter at Home project whose goal is not to be a current, modern, state-of-the-art datacenter, but a nice one. 🙂

Stay tuned! Don’t expect guides and tips, but rather a nice journey toward a perfect model mini datacenter at home. 😉

Kind regards

Tschokko